MLIR Toy Tutorial

1. Toy and AST

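Toy is a tensor-based language that lets you define functions, perform some math computations, and print results. To keep things simple, tensors have rank <= 2 and the only element type is f64. Values are immutable: every operation returns a newly allocated value, and deallocation is managed automatically, much as in a functional programming language. An example: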

def main() {

# Define a variable `a` with shape <2, 3>, initialized with the literal value.

# The shape is inferred from the supplied literal.

var a = [[1, 2, 3], [4, 5, 6]];

# b is identical to a, the literal tensor is implicitly reshaped: defining new

# variables is the way to reshape tensors (element count must match).

var b<2, 3> = [1, 2, 3, 4, 5, 6];

# transpose() and print() are the only builtin, the following will transpose

# a and b and perform an element-wise multiplication before printing the result.

print(transpose(a) * transpose(b));

}

Type checking is statically performed through type inference.
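The reshape rule in the comments above (a literal can be given a new shape only when the element count matches) can be sketched in a few lines of standalone C++. This is only an illustration: `Shape`, `elementCount`, and `reshape` are hypothetical names introduced here and are not part of the Toy sources.

```cpp
#include <cstddef>
#include <functional>
#include <numeric>
#include <optional>
#include <vector>

// Hypothetical helper type for illustration; not taken from the Toy sources.
using Shape = std::vector<std::size_t>;

// Number of elements implied by a shape, e.g. <2, 3> holds 6 elements.
std::size_t elementCount(const Shape &shape) {
  return std::accumulate(shape.begin(), shape.end(), std::size_t{1},
                         std::multiplies<std::size_t>());
}

// A declaration such as `var b<2, 3> = [1, 2, 3, 4, 5, 6];` is accepted only
// when the literal and the declared shape hold the same number of elements.
// Returns the declared shape on success and std::nullopt on a mismatch.
std::optional<Shape> reshape(const Shape &literalShape, const Shape &declared) {
  if (elementCount(literalShape) != elementCount(declared))
    return std::nullopt;
  return declared;
}
```

Under these assumptions, reshaping a `<6>` literal to `<2, 3>` succeeds, while reshaping it to `<2, 2>` is rejected.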

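Toy only requires type declarations that specify tensor shapes where necessary. Functions are generic: the shapes of their parameters are not known up front, and a function is only specialized when it is called.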
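As a rough, MLIR-free illustration of specialization at call sites, the sketch below infers the result shape of a function like `transpose(a) * transpose(b)` and caches one specialization per pair of argument shapes. The class `MultiplyTransposeSpecializer` and the helpers are hypothetical and only model the behavior described in the comments of the Toy listing that follows. They are not how toyc implements it.

```cpp
#include <cstddef>
#include <map>
#include <optional>
#include <utility>
#include <vector>

using Shape = std::vector<std::size_t>;

// transpose() reverses the dimensions: <2, 3> becomes <3, 2>.
Shape transposeShape(const Shape &s) { return Shape(s.rbegin(), s.rend()); }

// Element-wise `*` requires both operands to have the same shape.
std::optional<Shape> mulShape(const Shape &lhs, const Shape &rhs) {
  if (lhs != rhs)
    return std::nullopt;
  return lhs;
}

// Hypothetical cache: one specialization per distinct pair of argument shapes.
class MultiplyTransposeSpecializer {
public:
  // Returns the inferred result shape, or std::nullopt on a shape error.
  std::optional<Shape> call(const Shape &a, const Shape &b) {
    auto key = std::make_pair(a, b);
    auto it = cache.find(key);
    if (it != cache.end())
      return it->second; // Reuse the previously inferred version.
    ++specializations;
    auto result = mulShape(transposeShape(a), transposeShape(b));
    cache.emplace(key, result);
    return result;
  }

  std::size_t specializationCount() const { return specializations; }

private:
  std::map<std::pair<Shape, Shape>, std::optional<Shape>> cache;
  std::size_t specializations = 0;
};
```

Calling it twice with `<2, 3>` for both arguments triggers a single specialization that returns `<3, 2>`. Calling it with `<3, 2>` for both triggers a second one, and mixing `<2, 3>` with `<3, 2>` yields an error value.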

# User defined generic function that operates on unknown shaped arguments.

def multiply_transpose(a, b) {

return transpose(a) * transpose(b);

}

def main() {

# Define a variable `a` with shape <2, 3>, initialized with the literal value.

var a = [[1, 2, 3], [4, 5, 6]];

var b<2, 3> = [1, 2, 3, 4, 5, 6];

# This call will specialize `multiply_transpose` with <2, 3> for both

# arguments and deduce a return type of <3, 2> in initialization of `c`.

var c = multiply_transpose(a, b);

# A second call to `multiply_transpose` with <2, 3> for both arguments will

# reuse the previously specialized and inferred version and return <3, 2>.

var d = multiply_transpose(b, a);

# A new call with <3, 2> (instead of <2, 3>) for both dimensions will

# trigger another specialization of `multiply_transpose`.

var e = multiply_transpose(c, d);

# Finally, calling into `multiply_transpose` with incompatible shapes

# (<2, 3> and <3, 2>) will trigger a shape inference error.

var f = multiply_transpose(a, c);

}

Use the following commands to generate the AST of the Toy program:

cd llvm-project/build/bin

./toyc-ch1 ../../mlir/test/Examples/Toy/Ch1/ast.toy --emit=ast

The output is:

Module:

Function

Proto 'multiply_transpose' @../../mlir/test/Examples/Toy/Ch1/ast.toy:4:1

Params: [a, b]

Block {

Return

BinOp: * @../../mlir/test/Examples/Toy/Ch1/ast.toy:5:25

Call 'transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:5:10

var: a @../../mlir/test/Examples/Toy/Ch1/ast.toy:5:20

]

Call 'transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:5:25

var: b @../../mlir/test/Examples/Toy/Ch1/ast.toy:5:35

]

} // Block

Function

Proto 'main' @../../mlir/test/Examples/Toy/Ch1/ast.toy:8:1

Params: []

Block {

VarDecl a<> @../../mlir/test/Examples/Toy/Ch1/ast.toy:11:3

Literal: <2, 3>[ <3>[ 1.000000e+00, 2.000000e+00, 3.000000e+00], <3>[ 4.000000e+00, 5.000000e+00, 6.000000e+00]] @../../mlir/test/Examples/Toy/Ch1/ast.toy:11:11

VarDecl b<2, 3> @../../mlir/test/Examples/Toy/Ch1/ast.toy:15:3

Literal: <6>[ 1.000000e+00, 2.000000e+00, 3.000000e+00, 4.000000e+00, 5.000000e+00, 6.000000e+00] @../../mlir/test/Examples/Toy/Ch1/ast.toy:15:17

VarDecl c<> @../../mlir/test/Examples/Toy/Ch1/ast.toy:19:3

Call 'multiply_transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:19:11

var: a @../../mlir/test/Examples/Toy/Ch1/ast.toy:19:30

var: b @../../mlir/test/Examples/Toy/Ch1/ast.toy:19:33

]

VarDecl d<> @../../mlir/test/Examples/Toy/Ch1/ast.toy:22:3

Call 'multiply_transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:22:11

var: b @../../mlir/test/Examples/Toy/Ch1/ast.toy:22:30

var: a @../../mlir/test/Examples/Toy/Ch1/ast.toy:22:33

]

VarDecl e<> @../../mlir/test/Examples/Toy/Ch1/ast.toy:25:3

Call 'multiply_transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:25:11

var: c @../../mlir/test/Examples/Toy/Ch1/ast.toy:25:30

var: d @../../mlir/test/Examples/Toy/Ch1/ast.toy:25:33

]

VarDecl f<> @../../mlir/test/Examples/Toy/Ch1/ast.toy:28:3

Call 'multiply_transpose' [ @../../mlir/test/Examples/Toy/Ch1/ast.toy:28:11

var: a @../../mlir/test/Examples/Toy/Ch1/ast.toy:28:30

var: c @../../mlir/test/Examples/Toy/Ch1/ast.toy:28:33

]

} // Block

The lexer and parser live in the following files:

examples/toy/Ch1/include/toy/Lexer.h

examples/toy/Ch1/include/toy/Parser.h

2. Emitting Basic MLIR

2.1 Intro

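Other compilers, such as LLVM, offer a fixed set of predefined types and (usually low-level, RISC-like) instructions. Before emitting LLVM IR, the frontend for a given language has to perform any language-specific type checking, analysis, or transformation itself. For example, Clang uses its AST not only for static analysis but also for transformations, such as C++ template instantiation through AST cloning and rewriting. Finally, languages with constructs at a higher level than C/C++ may need a non-trivial lowering from their AST before they can generate LLVM IR.

As a consequence, multiple frontends end up reimplementing significant pieces of infrastructure to support these analyses and transformations. MLIR addresses this by being designed for extensibility, so it has very few predefined instructions (operations, in MLIR terminology) or types.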

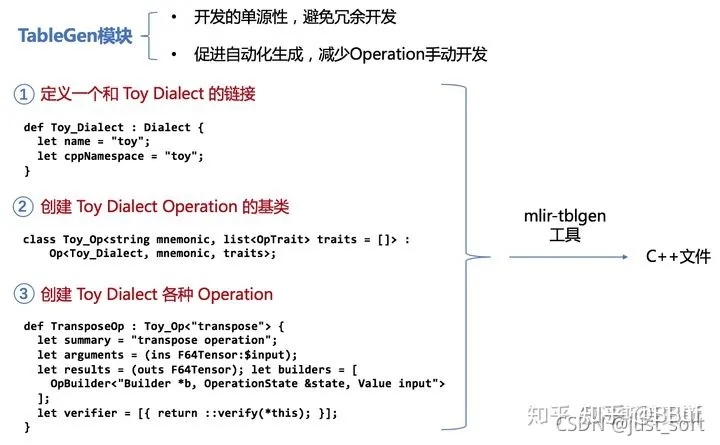

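MLIR is a fully extensible infrastructure: there is no closed set of attributes, operations, or types. Extensibility is provided through dialects, which act as a grouping mechanism for abstractions under a specific namespace.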

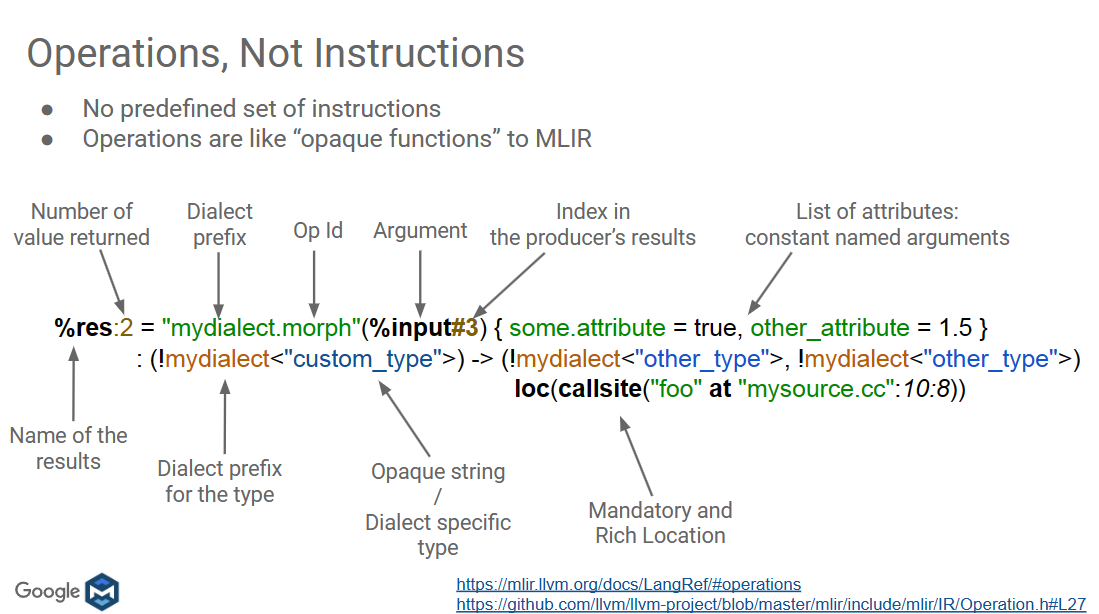
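To make the namespace idea concrete, here is a small standalone C++ sketch that splits a fully qualified operation name such as `toy.transpose` into its dialect namespace and the name within that dialect. The function `splitOperationName` is a hypothetical helper written for this note. It does not use or reproduce any MLIR API.

```cpp
#include <optional>
#include <string>
#include <string_view>
#include <utility>

// Split "toy.transpose" into {"toy", "transpose"} at the first '.'.
// Returns std::nullopt when there is no dialect prefix or no name after it.
std::optional<std::pair<std::string, std::string>>
splitOperationName(std::string_view name) {
  auto dot = name.find('.');
  if (dot == std::string_view::npos || dot == 0 || dot + 1 == name.size())
    return std::nullopt;
  return std::make_pair(std::string(name.substr(0, dot)),
                        std::string(name.substr(dot + 1)));
}
```

With this helper, `toy.transpose` yields the namespace `toy` and the name `transpose`, while a bare `transpose` is rejected because it carries no dialect prefix.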

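In MLIR, operations are the core unit of abstraction and computation, similar in many ways to LLVM instructions.

%t_tensor = "toy.transpose"(%tensor) {inplace = true} : (tensor<2x3xf64>) -> tensor<3x2xf64> loc("example/file/path":12:1)

Note the loc attached to the operation. Location information is a core requirement in MLIR: APIs depend on it and manipulate it, and it is never dropped unless that is done explicitly. When one operation is transformed into another, the result can therefore still be traced back to its source through loc.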
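The tracking idea can be sketched without MLIR: when an operation is rewritten into another one, the new operation simply inherits the source location of the one it replaces. The types `SourceLoc` and `Op`, the function `lowerTo`, and the operation name passed to it are all hypothetical stand-ins used for illustration only.

```cpp
#include <string>

// Hypothetical stand-in for a file:line:col location.
struct SourceLoc {
  std::string file;
  unsigned line;
  unsigned col;
};

// Hypothetical stand-in for an operation: just a name plus its location.
struct Op {
  std::string name;
  SourceLoc loc;
};

// Rewrite `source` into an operation called `newName`, carrying the original
// location along so the result can still be traced back to the source text.
Op lowerTo(const Op &source, const std::string &newName) {
  return Op{newName, source.loc};
}
```

In this sketch, an op created at `"example/file/path":12:1` keeps that location after being rewritten, which is the property that makes diagnostics on transformed IR point back to the original program.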

2.2 Defining a Toy Dialect

C++ definition:

/// This is the definition of the Toy dialect. A dialect inherits from

/// mlir::Dialect and registers custom attributes, operations, and types. It can

/// also override virtual methods to change some general behavior, which will be

/// demonstrated in later chapters of the tutorial.

class ToyDialect : public mlir::Dialect {

public:

explicit ToyDialect(mlir::MLIRContext *ctx);

/// Provide a utility accessor to the dialect namespace.

static llvm::StringRef getDialectNamespace() { return "toy"; }

/// An initializer called from the constructor of ToyDialect that is used to

/// register attributes, operations, types, and more within the Toy dialect.

void initialize();

};

TableGen definition:

// Provide a definition of the 'toy' dialect in the ODS framework so that we

// can define our operations.

def Toy_Dialect : Dialect {

// The namespace of our dialect, this corresponds 1-1 with the string we

// provided in `ToyDialect::getDialectNamespace`.

let name = "toy";

// A short one-line summary of our dialect.

let summary = "A high-level dialect for analyzing and optimizing the "

"Toy language";

// A much longer description of our dialect.

let description = [{

The Toy language is a tensor-based language that allows you to define

functions, perform some math computation, and print results. This dialect

provides a representation of the language that is amenable to analysis and

optimization.

}];

// The C++ namespace that the dialect class definition resides in.

let cppNamespace = "toy";

}

Running mlir-tblgen with the -gen-dialect-decls option shows the generated C++ source code:

${build_root}/bin/mlir-tblgen -gen-dialect-decls ${mlir_src_root}/examples/toy/Ch2/include/toy/Ops.td -I ${mlir_src_root}/include/

The output is:

namespace mlir {

namespace toy {

class ToyDialect : public ::mlir::Dialect {

explicit ToyDialect(::mlir::MLIRContext *context);

void initialize();

friend class ::mlir::MLIRContext;

public:

~ToyDialect() override;

static constexpr ::llvm::StringLiteral getDialectNamespace() {

return ::llvm::StringLiteral("toy");

}

};

} // namespace toy

} // namespace mlir

MLIR_DECLARE_EXPLICIT_TYPE_ID(::mlir::toy::ToyDialect)

2.3 Defining Toy Operations

Create a toy.constant operation to represent constants:

%4 = "toy.constant"() {value = dense<1.0> : tensor<2x3xf64>} : () -> tensor<2x3xf64>

An operation class inherits from the CRTP mlir::Op class, which also takes some optional traits to customize its behavior. Traits are a mechanism with which we can inject additional behavior into an Operation, such as additional accessors, verification, and more.
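For readers unfamiliar with CRTP, the following standalone sketch shows how traits passed as template parameters can inject both verification and accessors into an op class. It is a simplified model written for this note (requires C++17). `OperationStorage`, `OpBase`, `ConstantLikeOp`, and the trait classes here are hypothetical and deliberately much simpler than the real mlir::Op machinery.

```cpp
#include <string>
#include <vector>

// Hypothetical stand-in for an operation's generic storage.
struct OperationStorage {
  std::string name;
  std::vector<int> operands;
  std::vector<int> results;
};

// A trait is a class template parameterized on the concrete op type.
template <typename ConcreteOp>
struct ZeroOperands {
  static bool verifyTrait(const OperationStorage &op) {
    return op.operands.empty();
  }
};

template <typename ConcreteOp>
struct OneResult {
  static bool verifyTrait(const OperationStorage &op) {
    return op.results.size() == 1;
  }
  // Accessor injected into every op that lists this trait.
  int getResult() const {
    return static_cast<const ConcreteOp *>(this)->storage().results.front();
  }
};

// CRTP base: takes the derived class plus a list of traits and inherits
// from each trait instantiated on the derived class.
template <typename ConcreteOp, template <typename> class... Traits>
class OpBase : public Traits<ConcreteOp>... {
public:
  explicit OpBase(const OperationStorage &s) : state(&s) {}
  const OperationStorage &storage() const { return *state; }
  // The op is valid only if every attached trait verifies.
  bool verify() const {
    return (Traits<ConcreteOp>::verifyTrait(*state) && ...);
  }

private:
  const OperationStorage *state;
};

class ConstantLikeOp : public OpBase<ConstantLikeOp, ZeroOperands, OneResult> {
public:
  using OpBase::OpBase;
};
```

Here `ConstantLikeOp` gains `getResult()` and a `verify()` that rejects any storage with operands, purely from the traits it lists. This is the same shape as the ConstantOp declaration, which lists its traits after the derived class name.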

class ConstantOp : public mlir::Op<

/// `mlir::Op` is a CRTP class, meaning that we provide the

/// derived class as a template parameter.

ConstantOp,

/// The ConstantOp takes zero input operands.

mlir::OpTrait::ZeroOperands,

/// The ConstantOp returns a single result.

mlir::OpTrait::OneResult,

/// We also provide a utility `getType` accessor that

/// returns the TensorType of the single result.

mlir::OpTrait::OneTypedResult<TensorType>::Impl> {

public:

/// Inherit the constructors from the base Op class.

using Op::Op;

/// Provide the unique name for this operation. MLIR will use this to register

/// the operation and uniquely identify it throughout the system. The name

/// provided here must be prefixed by the parent dialect namespace followed

/// by a `.`.

static llvm::StringRef getOperationName() { return "toy.constant"; }

/// Return the value of the constant by fetching it from the attribute.

mlir::DenseElementsAttr getValue();

/// Operations may provide additional verification beyond what the attached

/// traits provide. Here we will ensure that the specific invariants of the

/// constant operation are upheld, for example the result type must be

/// of TensorType and matches the type of the constant `value`.

LogicalResult verifyInvariants();

/// Provide an interface to build this operation from a set of input values.

/// This interface is used by the `builder` classes to allow for easily

/// generating instances of this operation:

/// mlir::OpBuilder::create<ConstantOp>(...)

/// This method populates the given `state` that MLIR uses to create

/// operations. This state is a collection of all of the discrete elements

/// that an operation may contain.

/// Build a constant with the given return type and `value` attribute.

static void build(mlir::OpBuilder &builder, mlir::OperationState &state,

mlir::Type result, mlir::DenseElementsAttr value);

/// Build a constant and reuse the type from the given 'value'.

static void build(mlir::OpBuilder &builder, mlir::OperationState &state,

mlir::DenseElementsAttr value);

/// Build a constant by broadcasting the given 'value'.

static void build(mlir::OpBuilder &builder, mlir::OperationState &state,

double value);

};

Register the operation in ToyDialect::initialize:

void ToyDialect::initialize() {

addOperations<ConstantOp>();

}

2.4 Op vs Operation

An Op is a smart-pointer-like wrapper around an Operation.
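Before the MLIR version, a library-free sketch of the wrapper idea may help: the wrapper stores only a pointer to the generic operation, a failed cast produces a null wrapper, and the underlying pointer can always be recovered. `Operation` and `ConstantOpView` below are hypothetical stand-ins, not the MLIR classes.

```cpp
#include <string>

// Hypothetical stand-in for the generic operation object.
struct Operation {
  std::string name;
};

// Thin, cheap-to-copy view over an Operation, valid only for "toy.constant".
class ConstantOpView {
public:
  ConstantOpView() = default;

  // Mimics a dynamic cast: yields a null view when the op kind differs.
  static ConstantOpView dynCast(Operation *op) {
    if (op && op->name == "toy.constant")
      return ConstantOpView(op);
    return ConstantOpView();
  }

  // A view is truthy only when it wraps an operation.
  explicit operator bool() const { return state != nullptr; }

  // Recover the wrapped generic operation.
  Operation *getOperation() const { return state; }

private:
  explicit ConstantOpView(Operation *op) : state(op) {}
  Operation *state = nullptr;
};
```

Because the view holds nothing but a pointer, passing it by value is as cheap as passing the pointer itself.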

void processConstantOp(mlir::Operation *operation) {

ConstantOp op = llvm::dyn_cast<ConstantOp>(operation);

// This operation is not an instance of `ConstantOp`.

if (!op)

return;

// Get the internal operation instance wrapped by the smart pointer.

mlir::Operation *internalOperation = op.getOperation();

assert(internalOperation == operation &&

"these operation instances are the same");

}

2.5 ODS

Define the base class for Toy operations, Toy_Op:

// Base class for toy dialect operations. This operation inherits from the base

// `Op` class in OpBase.td, and provides:

// * The parent dialect of the operation.

// * The mnemonic for the operation, or the name without the dialect prefix.

// * A list of traits for the operation.

class Toy_Op<string mnemonic, list<Trait> traits = []> :

Op<Toy_Dialect, mnemonic, traits>;

ConstantOp defined with ODS:

def ConstantOp : Toy_Op<"constant"> {

...

// Add custom build methods for the constant operation. These methods populate

// the `state` that MLIR uses to create operations, i.e. these are used when

// using `builder.create<ConstantOp>(...)`.

let builders = [

// Build a constant with a given constant tensor value.

OpBuilder<(ins "DenseElementsAttr":$value), [{

// Call into an autogenerated `build` method.

build(builder, result, value.getType(), value);

}]>,

// Build a constant with a given constant floating-point value. This builder

// creates a declaration for `ConstantOp::build` with the given parameters.

OpBuilder<(ins "double":$value)>

];

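}

The generated op definitions can be inspected with the following command, run from llvm-project/build/bin:

./mlir-tblgen -gen-op-defs ../../mlir/examples/toy/Ch2/include/toy/Ops.td -I ../../mlir/include/

2.6 Summary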

Generate MLIR with the following command:

./toyc-ch2 ../../mlir/test/Examples/Toy/Ch2/codegen.toy -emit=mlir -mlir-print-debuginfo

The output is:

module {

toy.func @multiply_transpose(%arg0: tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":4:1), %arg1: tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":4:1)) -> tensor<*xf64> {

%0 = toy.transpose(%arg0 : tensor<*xf64>) to tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":5:10)

%1 = toy.transpose(%arg1 : tensor<*xf64>) to tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":5:25)

%2 = toy.mul %0, %1 : tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":5:25)

toy.return %2 : tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":5:3)

} loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":4:1)

toy.func @main() {

%0 = toy.constant dense<[[1.000000e+00, 2.000000e+00, 3.000000e+00], [4.000000e+00, 5.000000e+00, 6.000000e+00]]> : tensor<2x3xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":9:17)

%1 = toy.reshape(%0 : tensor<2x3xf64>) to tensor<2x3xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":9:3)

%2 = toy.constant dense<[1.000000e+00, 2.000000e+00, 3.000000e+00, 4.000000e+00, 5.000000e+00, 6.000000e+00]> : tensor<6xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":10:17)

%3 = toy.reshape(%2 : tensor<6xf64>) to tensor<2x3xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":10:3)

%4 = toy.generic_call @multiply_transpose(%1, %3) : (tensor<2x3xf64>, tensor<2x3xf64>) -> tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":11:11)

%5 = toy.generic_call @multiply_transpose(%3, %1) : (tensor<2x3xf64>, tensor<2x3xf64>) -> tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":12:11)

toy.print %5 : tensor<*xf64> loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":13:3)

toy.return loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":8:1)

} loc("../../mlir/test/Examples/Toy/Ch2/codegen.toy":8:1)

} loc(unknown)

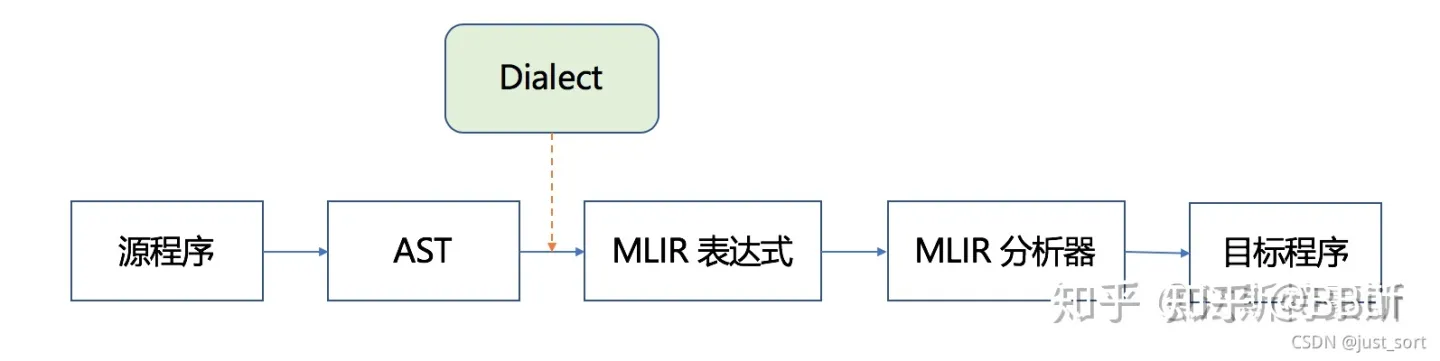

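We need to understand how this MLIR is produced from codegen.toy, that is, the step in the figure that goes from the AST to MLIR (including the dialect).

The AST traversal is handled by MLIRGen, implemented in mlir/examples/toy/Ch2/mlir/MLIRGen.cpp. It contains an mlirGen function, implemented as follows: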

/// Dispatch codegen for the right expression subclass using RTTI.

mlir::Value mlirGen(ExprAST &expr) {

switch (expr.getKind()) {

case toy::ExprAST::Expr_BinOp:

return mlirGen(cast<BinaryExprAST>(expr));

case toy::ExprAST::Expr_Var:

return mlirGen(cast<VariableExprAST>(expr));

case toy::ExprAST::Expr_Literal:

return mlirGen(cast<LiteralExprAST>(expr));

case toy::ExprAST::Expr_Call:

return mlirGen(cast<CallExprAST>(expr));

case toy::ExprAST::Expr_Num:

return mlirGen(cast<NumberExprAST>(expr));

default:

emitError(loc(expr.loc()))

<< "MLIR codegen encountered an unhandled expr kind '"

<< Twine(expr.getKind()) << "'";

return nullptr;

}

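}

This function dispatches on the kind of AST node and recursively calls the matching mlirGen overload, which performs the actual conversion to MLIR. Taking the transpose(a) call in codegen.toy above as an example, the corresponding mlirGen overload is: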

/// Emit a call expression. It emits specific operations for the `transpose`

/// builtin. Other identifiers are assumed to be user-defined functions.

mlir::Value mlirGen(CallExprAST &call) {

llvm::StringRef callee = call.getCallee();

auto location = loc(call.loc());

// Codegen the operands first.

SmallVector<mlir::Value, 4> operands;

for (auto &expr : call.getArgs()) {

auto arg = mlirGen(*expr);

if (!arg)

return nullptr;

operands.push_back(arg);

}

// Builtin calls have their custom operation, meaning this is a

// straightforward emission.

if (callee == "transpose") {

if (call.getArgs().size() != 1) {

emitError(location, "MLIR codegen encountered an error: toy.transpose "

"does not accept multiple arguments");

return nullptr;

}

return builder.create<TransposeOp>(location, operands[0]);

}

// Otherwise this is a call to a user-defined function. Calls to

// user-defined functions are mapped to a custom call that takes the callee

// name as an attribute.

return builder.create<GenericCallOp>(location, callee, operands);

}

The check if (callee == "transpose") inspects the callee name. If the callee is transpose, a new MLIR node of type TransposeOp is created with builder.create<TransposeOp>(location, operands[0]).
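The control flow of that call handler can be modeled without MLIR. The sketch below mirrors only the decision logic: a builtin `transpose` with exactly one argument becomes a dedicated op, any other callee becomes a generic call that records the callee name. `EmittedOp` and `emitCall` are hypothetical names for this note. The real code builds operations through `builder.create<...>` instead.

```cpp
#include <cstddef>
#include <optional>
#include <string>
#include <vector>

// Hypothetical record of which operation the codegen step would create.
struct EmittedOp {
  std::string name;        // e.g. "toy.transpose" or "toy.generic_call"
  std::string callee;      // only meaningful for generic calls
  std::size_t numOperands; // number of already-generated operands
};

// Mirrors the decision logic of the call handler: operands are assumed to
// have been generated already and are passed in as SSA-style names.
std::optional<EmittedOp> emitCall(const std::string &callee,
                                  const std::vector<std::string> &operands) {
  if (callee == "transpose") {
    // The builtin accepts exactly one argument; anything else is an error.
    if (operands.size() != 1)
      return std::nullopt;
    return EmittedOp{"toy.transpose", "", 1};
  }
  // Any other identifier is treated as a user-defined function.
  return EmittedOp{"toy.generic_call", callee, operands.size()};
}
```

In this model, `emitCall("multiply_transpose", {"%1", "%3"})` corresponds to the `toy.generic_call @multiply_transpose(%1, %3)` line in the MLIR output above.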